[et_pb_section admin_label=”section”][et_pb_row admin_label=”row”][et_pb_column type=”4_4″][et_pb_text admin_label=”Text”]

So what has a burger on a beach got to do with photogrammetry? I worked on the McDonald's commercial below, and we needed to add animated, hand-drawn, photorealistic paint (sun cream) to several actors' faces. We captured models of the actors using photogrammetry and then did the job entirely in Nuke, with no 3D rendering or modelling. If you want to read how we did it, see the breakdown at the bottom of this post.

Marc Reisbig – Mcdonalds 30" from FIELDTRIP on Vimeo.

SOFTWARE COMPARED

I tested several photogrammetry packages, including 123D Catch, PhotoScan, VisualSFM, MeshLab and ARC 3D. The services that you upload your images to initially gave the best results, but we had to discount anything that could not be used commercially. That left PhotoScan and Autodesk's 123D Catch. 123D Catch initially came back with the best results, but we then realised this was because it was more forgiving of badly taken photographs. Given good, clean imagery, PhotoScan came back with more accurate data. We also discovered that one can use less accurate photos as long as one sets Depth Filtering to ‘aggressive’ and the quality setting to low; we then got results similar to 123D Catch, but with added control. The main downside in comparison was that PhotoScan took a lot longer to compute the point cloud.

METHODOLOGY

Taking accurate photos for photogrammetry has more specific requirements than panoramic photography. The main issues are that you need over twice the coverage (even more when panning) and that the patterns recognised in the images must be as near identical as possible from shot to shot, which is more challenging because the camera move is non-nodal. Focus, noise, reflections and flat areas with no detail all pose problems. I’m not going to go into this here, as these other sites have covered it well:

- http://www.tested.com/art/makers/460142-art-photogrammetry-how-take-your-photos

- http://www.agisoft.ru/pdf/Image%20Capture%20Tips%20-%20Equipment%20and%20Shooting%20Scenarios.pdf

- http://www.agisoft.ru/pdf/photoscan_pro_1_0_en.pdf

- http://www.agisoft.ru/wiki/PhotoScan/Tips_and_Tricks
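To make the coverage point concrete, here is a minimal sketch of how the shot count grows with overlap when photographing a flat façade square-on. The function name and the 30% and 70% overlap figures are illustrative assumptions of mine, not values from the shoot or from any of the guides above; check the guides for the overlap your software actually wants.

```python
import math

def shots_for_facade(wall_length, distance, hfov_deg, overlap):
    """Estimate how many photos cover a flat wall at a given overlap.

    wall_length and distance share one unit (e.g. metres).
    overlap is the fraction of each frame shared with the next (0 to <1).
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    # Width of wall seen in a single frame when shooting square-on.
    footprint = 2.0 * distance * math.tan(math.radians(hfov_deg) / 2.0)
    if wall_length <= footprint:
        return 1
    # Each new shot only advances by the non-overlapping part of the frame.
    step = footprint * (1.0 - overlap)
    return math.ceil((wall_length - footprint) / step) + 1

# Hypothetical 20 m wall, shot from 5 m with a 60 degree horizontal field of view.
panorama_style = shots_for_facade(20, 5, 60, overlap=0.3)        # 5
photogrammetry_style = shots_for_facade(20, 5, 60, overlap=0.7)  # 10
```

This only models a single straight pass along a wall; orbiting an object or shooting from several heights multiplies the count further.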

In the right lighting conditions, photogrammetry is very good at three specific scenarios: models, façades and aerial photography of buildings.

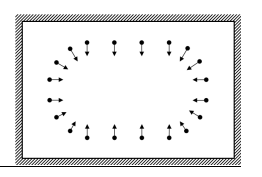

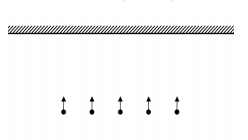

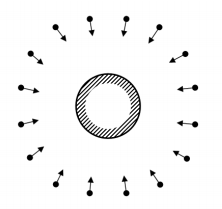

The photos of objects or façades need to be taken like this:

Here are some good examples on SketchUp of photogrammetry models:

INTERIORS

Where it does not do well is interiors, particularly plain walls or objects in a room (like furniture) that have not been separately modelled.

You can see from the models below that it fails where there is a lack of texture or where there are small foreground objects in a room.

However, the stone texture and empty room of the last one work okay:

Primary school classroom – 3d scan

CONCLUSION

The advantage of photogrammetry over lidar is that it is cost-effective, usable in drone photography and, with some software, can provide a 32-bit texture. The disadvantage is that, as yet, it does not work well for large scenes that require small details. The photos also need to be taken in specific lighting conditions to work best, which lidar does not require.

For VFX, photogrammetry is useful for:

- rough on-set models to assist modelling placement

- background set extension and modelling of buildings

- background set extension of landscapes

- static objects that don’t require rigging

BREAKDOWN OF THE WORKFLOW FOR THE McD ADVERT

We didn’t have the time or money to model, light and render photorealistic 3D, so apart from retopologising some UVs, this advert never saw a 3D program like Maya. The animation was done in Flash, and then, using models of the actors extracted from photos, we were able to do the whole job in 2D with Nuke. The other big advantage was that when the client wanted to go back to the animation of a shot a day before delivery, the changes took us less than a few hours' work, including rendering.

Basic Workflow

The actors were photographed in a white tent on swivel chairs from three different height positions. We then extracted the model and the texture with PhotoScan and retopologised the UVs. We tracked the actors' heads, but exported a moving camera rather than a moving model, to make it cheaper in Nuke and easier to work with textures. The animation was done flat and unwrapped in Flash, and then we hand-painted the brushstroke textures and output the brushstrokes as an alpha.
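The moving-camera trick is just a change of reference frame. As a rough sketch (not our actual tracking export; the helper names are made up for illustration), if the head has a rigid world transform H and the camera a world transform C on a given frame, a static head viewed through the camera inverse(H) × C gives the same image:

```python
import math

def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def rigid_inverse(m):
    """Invert a rigid transform (rotation + translation, no scale)."""
    r_t = [[m[j][i] for j in range(3)] for i in range(3)]  # transposed rotation
    t = [m[i][3] for i in range(3)]
    inv_t = [-sum(r_t[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [r_t[0] + [inv_t[0]],
            r_t[1] + [inv_t[1]],
            r_t[2] + [inv_t[2]],
            [0.0, 0.0, 0.0, 1.0]]

def transform_point(m, p):
    """Apply a 4x4 transform to a 3D point."""
    v = [p[0], p[1], p[2], 1.0]
    return [sum(m[i][k] * v[k] for k in range(4)) for i in range(3)]

def rotate_y_translate(angle_deg, t):
    """Build a rigid transform: rotation about Y, then translation t."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s, t[0]],
            [0.0, 1.0, 0.0, t[1]],
            [-s, 0.0, c, t[2]],
            [0.0, 0.0, 0.0, 1.0]]

def camera_for_static_model(head_world, camera_world):
    """Camera transform that sees a static head as the real camera saw the moving one."""
    return mat_mul(rigid_inverse(head_world), camera_world)

# Hypothetical single frame: a turned head and a slightly panned camera.
head = rotate_y_translate(30.0, [0.1, 1.6, -2.0])
cam = rotate_y_translate(-10.0, [0.0, 1.5, 3.0])
static_cam = camera_for_static_model(head, cam)
```

Doing this per frame leaves the geometry (and everything baked against it) untouched, so only the camera is animated.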

Modelling in Nuke

In Nuke I brought in the model, texture, animation texture and camera. To make things faster, I pre-baked a 5K normal map, an unwrap UV pass and a wrap UV pass, so the textures could be rendered onto the face quickly without running the model through a scanline render. Fine detail such as pimples was missing from the model because the heads moved as the actors were swivelled round when photographed. This fine detail, along with detail like the paint-stroke ridges, was added using a bumpNormal process by colour correcting the normal map (I downloaded these tools from Nukepedia, along with the rotate normal tool).
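I can't reproduce the Nukepedia gizmos here, but the general idea of getting bump detail out of a 2D image can be sketched as follows. This is the textbook approach of treating a greyscale image as a height map and deriving tangent-space normals from its slopes, which I am assuming is close in spirit to what such tools do; `normals_from_height` and its `strength` parameter are hypothetical names.

```python
import math

def normals_from_height(height, strength=1.0):
    """Derive unit normals from a 2D height map (list of rows of floats).

    Slopes come from central differences, clamped at the image border.
    A flat area gives (0, 0, 1); steeper slopes tilt the normal further.
    """
    rows, cols = len(height), len(height[0])
    normals = []
    for y in range(rows):
        row = []
        for x in range(cols):
            left = height[y][max(x - 1, 0)]
            right = height[y][min(x + 1, cols - 1)]
            up = height[max(y - 1, 0)][x]
            down = height[min(y + 1, rows - 1)][x]
            dx = (right - left) * 0.5 * strength
            dy = (down - up) * 0.5 * strength
            # The normal leans away from the direction of increasing height.
            n = (-dx, -dy, 1.0)
            length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
            row.append((n[0] / length, n[1] / length, n[2] / length))
        normals.append(row)
    return normals

# Hypothetical 3x3 patch: a ridge rising one unit per pixel from left to right.
ridge = [[0.0, 1.0, 2.0],
         [0.0, 1.0, 2.0],
         [0.0, 1.0, 2.0]]
ridge_normals = normals_from_height(ridge)
```

In a comp, the `strength` value plays the role of the colour correction: grading the source image up or down makes the ridges read as deeper or shallower.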

Lighting in Nuke

The fine detail from the bumpNormal process was added using the Relight node, with the light positioned to match the sun in the plate. I installed a 2D ambient occlusion plugin from Nukepedia to help with shadows, and then extracted spec, diffuse and shadow from the unwrapped plate and added them to or subtracted them from the animation texture.
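For anyone unfamiliar with relighting from a normal map, the diffuse part boils down to a dot product per pixel. The sketch below is a plain Lambert term with a made-up `ambient` floor, shown only to illustrate the principle; it is not what the Relight node does internally, and it ignores spec and shadows.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lambert(normal, light_dir):
    """Diffuse intensity: cosine of the angle between the normal and the
    direction towards the light, clamped so back-facing pixels get 0."""
    n = normalize(normal)
    l = normalize(light_dir)
    return max(0.0, sum(a * b for a, b in zip(n, l)))

def relight_pixel(colour, normal, light_dir, ambient=0.2):
    """Shade one texture pixel; ambient is an assumed fill level (0 to 1)."""
    shade = ambient + (1.0 - ambient) * lambert(normal, light_dir)
    return tuple(c * shade for c in colour)

# Hypothetical paint pixel facing the camera, sun high and to the right.
paint = (0.9, 0.85, 0.7)
lit = relight_pixel(paint, normal=(0.0, 0.0, 1.0), light_dir=(1.0, 1.0, 1.0))
```

Because the normal map carries the bump detail, moving `light_dir` is enough to make the ridges catch the light differently, with no 3D render involved.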

Using this process we got photorealistic shading that could be controlled in the same way one might in 3D, but each shot took no more than 30 minutes to render, and Nuke was responsive enough for me to work with the supervisor making decisions as one might in Flame. Credit goes to Tim Kilgour at Fieldtrip, who was the VFX supervisor on this ad.

[/et_pb_text][/et_pb_column][/et_pb_row][/et_pb_section]
